This post looks at how useful live voice-chat tools can be for distributed teams.

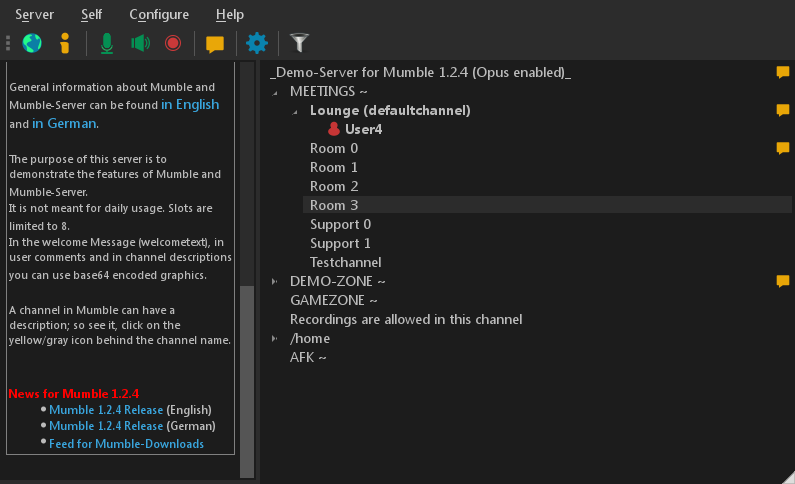

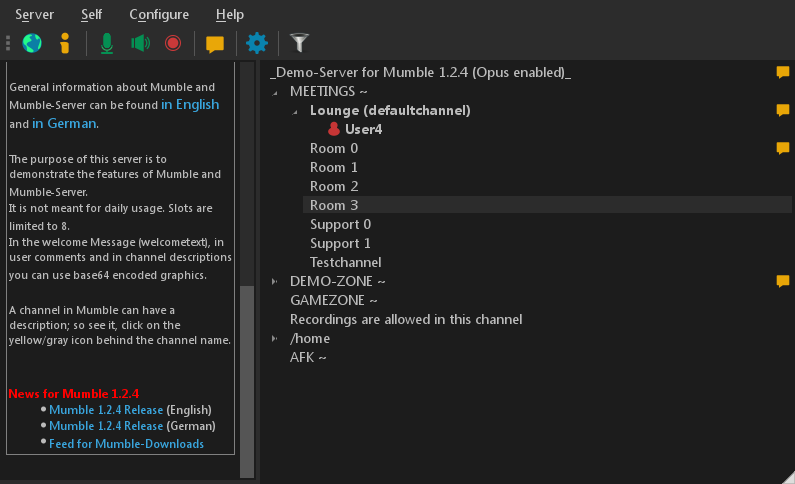

If you've ever seen the Leeeeeroooooyy Jeeeenkiiins video of World of Warcraft fame, you've heard this kind of tool in action. It's how the participants in the video are speaking with each other. This is not a feature built into the World of Warcraft game; it's separate, team-oriented VoIP software, and it's all about letting gamers talk to each other while gaming.

Since these tools are for gamers, they have to be

- fast (low latency)

- light (so as not to steal CPU cycles from heavy game graphics)

- moderate in bandwidth usage (so as not to affect the game server connection)

A few years ago, when I joined eyeo (a distributed company), several members of the operations team were avid gamers and had a TeamSpeak server set up between them for voice chat during online gaming sessions (a great team-building option for distributed teams, by the way).

As the team grew, we began experimenting with using this service for team communication in between meetings (we switched to Mumble at some point, so I'll refer to it as Mumble from now on).

Mumble turned out to hit a pretty sweet spot compared to our conventional video conferencing software (we use BlueJeans):

A video conference needs many clicks to fire up and get everyone dialed in.

Mumble, on the other hand, keeps the team constantly connected, so it takes just one click to start speaking.

The usual scenario goes like this:

1) I ping someone on IRC, asking a question

2) We go back and forth in writing for a few lines

3) One of us asks "mumble?"

4) We both un-mute ourselves in Mumble and start speaking

5) Others may listen in, and join the conversation if they feel like it, or move to a Busy channel if they want to focus on other work.

The video conferencing software will take up all bandwidth and resources.

Being optimized to imitate physical presence, the video conferencing software will use as much of your laptop's resources as it can to maximize quality, sometimes with devastating effects (especially on Linux).

Mumble, on the other hand, sacrifices video completely and focuses on voice only. It is optimized to save resources, so you barely notice it is running.
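To get a rough feel for what dropping video means for bandwidth, here is a small back-of-the-envelope sketch. The two bitrates are illustrative assumptions picked for the arithmetic, not measurements of Mumble or BlueJeans, and the function name is made up for this example.

```python
def megabytes_per_hour(bitrate_kbit_per_s: float) -> float:
    """Convert a constant stream bitrate (kbit/s) into data volume per hour (MB)."""
    bits_per_hour = bitrate_kbit_per_s * 1000 * 3600
    return bits_per_hour / 8 / 1_000_000


# Assumed example bitrates (hypothetical placeholders, not measured values).
VOICE_KBIT_PER_S = 40
VIDEO_KBIT_PER_S = 2000

if __name__ == "__main__":
    for label, rate in [("voice only", VOICE_KBIT_PER_S),
                        ("video call", VIDEO_KBIT_PER_S)]:
        print(f"{label}: {megabytes_per_hour(rate):.0f} MB per hour")
```

Swap in bitrates you have actually observed on your own setup to make the comparison meaningful.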

Video conferences do not work on poor connections (or require you to dial in via expensive phone numbers).

Mumble, on the other hand, works excellently on poor connections. Latency is remarkably low, which you can test with the "ping pong test":

1) You say "ping"

2) The other person says "pong" when they hear you

3) You say "ping" when you hear their "pong"

4) Repeat until you get a feel for how long a round trip takes
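If you want a number rather than a feel, the steps above can be turned into a rough estimate. This is a minimal sketch, assuming you note a timestamp each time you say "ping" (with a stopwatch, for example). The function name and the `reaction_time` parameter are made up for illustration. Each gap between two of your pings contains one full round trip plus the time both people need to react and speak, so the estimate is only as good as your guess for that human delay.

```python
from statistics import mean


def estimate_round_trip(ping_times, reaction_time=0.0):
    """Estimate one voice round trip (seconds) from the moments you said "ping".

    ping_times: timestamps in seconds, one for each "ping" you said, in order.
    reaction_time: assumed human delay per cycle (both people reacting and
        speaking), in seconds. This is a guess, not something measured here.
    """
    if len(ping_times) < 2:
        raise ValueError("need at least two pings to measure a round trip")
    # Gap between consecutive pings = round trip + human reaction time.
    intervals = [later - earlier
                 for earlier, later in zip(ping_times, ping_times[1:])]
    # Never report a negative latency if the reaction-time guess is too large.
    return max(mean(intervals) - reaction_time, 0.0)


if __name__ == "__main__":
    # Hypothetical timestamps: a "ping" every two seconds.
    print(estimate_round_trip([0.0, 2.0, 4.0, 6.0], reaction_time=0.5))
```

Running the same test on both tools with the same two people keeps the human delay roughly constant, so the difference between the two estimates is the interesting part.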

In our video conferencing system, a round trip takes about 1-2 seconds (!).

In Mumble, it's like being next to the other person. You can barely notice the latency, even when speaking to colleagues halfway around the world (last Friday I did the ping pong test with one colleague in Yekaterinburg and another in India).

I think this difference in latency may have a HUGE effect on natural discussions in larger distributed groups, but that's a theory for another day.

So, as it turns out, the things that make gamers love Mumble may also let distributed teams love Mumble.

If you want to try this out with your team right now, you may be better off just starting with Discord, as it is free and hassle-free to set up. At eyeo we are pretty adamant about things like security and privacy, however, so we're glad we can use the awesome open-source Mumble on our own servers.
